In its broadest form, automation is a powerful resource in the materials characterization toolkit.

Automation can benefit the science performed in a wide range of laboratories. It speeds up and streamlines processes by increasing instrument productivity, increasing the number of samples run per day and, most crucially, increasing day-to-day data density and improving the statistical relevance of results.

Automated procedures and recipes can run instruments 24 hours a day, seven days a week. Automation also improves the overall accessibility of the instruments – an often-overlooked consideration that can be key to developing and refining operators’ skills at all levels.

Automation also helps ensure consistent experimental procedures, which ultimately drives the precision of results and improves confidence in outcomes – a key consideration where experiments must be repeated across a range of samples or over an extended timeframe.

Scripting is one such means of automating imaging, analysis, data collection, and processing. This recent interview with Ruud Bernsen from Thermo Fisher Scientific explores some of the current research into the use of scripting to automate, empower and improve the reliability of desktop scanning electron microscopes (SEMs).

How has SEM technology, and particularly its workflows, evolved over time?

In the beginning, working with SEMs was primarily manual work. Image parameters had to be set manually, and this had to be done for each individual image.

This changed with new digital technology and the advent of the desktop SEM. Electron microscopes became smaller, easier to use, and faster. The technology has since become fully digital, making it possible to operate the system through a fully digital user interface.

There was still a need to gather the data yourself, however. For example, you had to look for your area of interest, take an image, save it and analyze it using a series of manual processes. These days, we can also automate this data acquisition step.

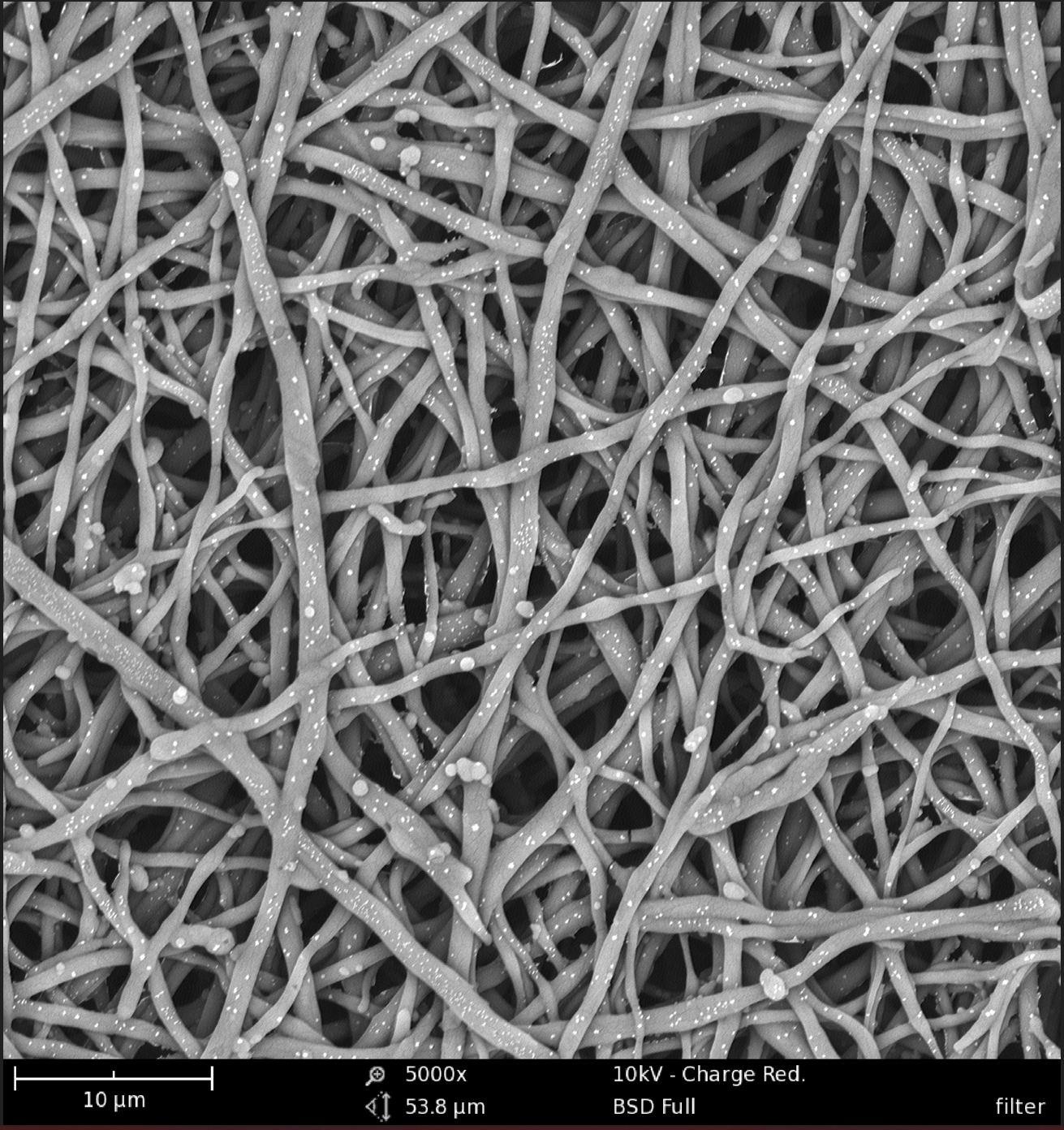

Image Credit: Filter00006: Meltblown filter material. Fiber thickness measurements can be easily automated for such filters. How? Ask one of the Thermo Fisher Scientific experts.

What would a typical automation workflow look like for an SEM-based application? Can you provide a working example?

To illustrate this concept, I will discuss an example in which we perform quality control of electrospun nanofibers for a medical application with stringent requirements.

We have to precisely adhere to the protocol and not change it. This involves measuring the fibers’ thickness, orientation, and the area covered by the fibers. We have to inspect 20 samples each day. Each sample has to be analyzed, and 50 images per sample have to be gathered to acquire sufficient statistical data.

Each image takes approximately 20 seconds to acquire by hand, much longer than with an automated process. Acquiring an image by hand means moving to a random area, which is already hard for a human, setting the focus, adjusting the contrast and brightness if necessary, and storing the image. This is quite tedious work, especially when it takes five and a half hours each day.

We can automate tasks like this using the Phenom™ Programming Interface, or PPI for short. With PPI, users can write scripts in Python or C#, and through scripting they can take full control of the Phenom in an automated way. The Phenom Pharos desktop SEM can complete this task in around 7 seconds per image.
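The time figures quoted above can be checked with a few lines of Python. This is only a back-of-the-envelope sketch: it uses the 20 samples, 50 images per sample, and 20 seconds per manual image from the interview, and it assumes the 7-second figure is a per-image acquisition time.

```python
# Figures quoted in the interview
SAMPLES_PER_DAY = 20
IMAGES_PER_SAMPLE = 50
MANUAL_SECONDS_PER_IMAGE = 20
AUTOMATED_SECONDS_PER_IMAGE = 7  # assumption: interpreted as a per-image time


def daily_hours(seconds_per_image):
    """Total image acquisition time per day, in hours."""
    total_images = SAMPLES_PER_DAY * IMAGES_PER_SAMPLE
    return total_images * seconds_per_image / 3600


manual_hours = daily_hours(MANUAL_SECONDS_PER_IMAGE)
automated_hours = daily_hours(AUTOMATED_SECONDS_PER_IMAGE)
print(f"manual: {manual_hours:.2f} h, automated: {automated_hours:.2f} h")
```

Under these assumptions, the manual route costs roughly five and a half hours of operator time each day, while the automated route takes just under two hours of unattended instrument time.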

Where does scripting fit into this workflow, and how can it benefit these automated processes?

Let me first explain what scripting is. The user interface has easy-to-operate buttons, but what we don’t see as users is what happens behind the scenes. Each time the user presses a button, the software sends a command to the Phenom, and the Phenom executes that command. For example, the Phenom unload button tells the Phenom to unload the sample holder when pressed. The same goes for any button in the user interface. Luckily, there is no need to enter these commands manually – the user can press the button. A script is a set of these commands executed one after another. Instead of pushing all the buttons manually, I can just run the script and everything is done automatically.

Now where does the script fit in? The script can perform the image gathering and analysis. The user only has to insert the samples and run the script. The script will take control of the Phenom, gather the images and analyze them automatically. When the script has finished, the user can review the results and load the next batch.

In this case the script saves the user valuable time. The user is free to perform other tasks while the script is running and only has to review the data. The script also ensures a standard way of working and reporting that is fully customizable to the protocol or process in question, and it makes the SEM easy to operate.
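The idea of a script as a sequence of button presses can be sketched in Python. The class and command names below are hypothetical and purely illustrative; they are not PPI commands, and the stand-in instrument only records what it is told to do.

```python
class SimulatedInstrument:
    """Hypothetical stand-in for an SEM; it only records the commands it receives."""

    def __init__(self):
        self.log = []

    def execute(self, command, **params):
        # A real instrument would act on the command; here we just log it.
        self.log.append((command, params))


def run_script(instrument, commands):
    """Send each (command, params) pair to the instrument, in order."""
    for command, params in commands:
        instrument.execute(command, **params)


# Each entry mirrors one button press in a user interface (illustrative names).
script = [
    ("load", {}),
    ("move_to", {"x": 0.001, "y": -0.002}),
    ("acquire_image", {"filename": "image_001.tiff"}),
    ("unload", {}),
]

sem = SimulatedInstrument()
run_script(sem, script)
print([command for command, _ in sem.log])
```

The point of the sketch is only that the commands run one after another without anyone pressing a button in between.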

The Phenom system uses the Phenom Programming Interface (PPI) platform – an Application Programming Interface (API). This is an externally accessible interface. An operator can give commands to the system from another source using a script, for example, to control the column, stage, or scanning in the SEM.

A script can be written in a programming language; scripts that use PPI can be written in Python or C#. PPI can be understood as the list of commands that users can send from the work PC, over the network, to the Phenom. The interface receives each command and tells the system’s column, stage, or another part to execute it, just as when a button is pressed. This can be done remotely – the user does not need to be present at the instrument.

For example, if a user wanted to write a simple script to gather 100 images at random locations on the sample, they would start by importing the correct modules to interface with the Phenom, including the necessary PPI commands and a random number generator. The script would then ask the user to type the sample name or batch number before determining the starting stage position of the Phenom.

The script would include a loop repeated 100 times. In each iteration, we generate random X and Y coordinates for the stage and tell the Phenom stage to move to that location. Once there, we acquire a full HD image around 16 times, average the acquired frames, and save the resulting image to a file.

Acquiring images in this way takes just a few lines of code and gives us an automated process for image gathering.
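The loop described above might look roughly like the following sketch. It does not use the real PPI module: `SimulatedSem` and its methods are hypothetical stand-ins, the 1920 x 1080 resolution is an assumed reading of "full HD", the stage range is an arbitrary illustrative value, and the sample name is passed as an argument rather than typed in by the user.

```python
import random


class SimulatedSem:
    """Hypothetical stand-in for the instrument; not the PPI API."""

    def __init__(self):
        self.position = (0.0, 0.0)
        self.saved = []

    def move_to(self, x, y):
        self.position = (x, y)

    def acquire_image(self, width, height, frames):
        # Return a description of the acquisition instead of pixel data.
        return {"size": (width, height), "frames": frames, "position": self.position}

    def save(self, image, filename):
        self.saved.append((filename, image))


def gather_random_images(sem, sample_name, count=100, radius=0.004, seed=None):
    """Acquire `count` images at random offsets within +/- radius of the start position."""
    rng = random.Random(seed)
    start_x, start_y = sem.position  # remember where the stage started
    for index in range(count):
        x = start_x + rng.uniform(-radius, radius)
        y = start_y + rng.uniform(-radius, radius)
        sem.move_to(x, y)
        # Assumed full HD size, with 16 frames averaged per image.
        image = sem.acquire_image(1920, 1080, frames=16)
        sem.save(image, f"{sample_name}_{index:03d}.tiff")
    return sem.saved


sem = SimulatedSem()
saved = gather_random_images(sem, sample_name="batch42", count=100, seed=1)
print(len(saved), saved[0][0])
```

With a real instrument, the three stand-in methods would be replaced by the corresponding calls documented in the PPI manual.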

There is no need to create a full user interface for a relatively simple script like this, and it is just one application that highlights the benefits of automation. Using a looping image acquisition script like the one described means that there is no need for a researcher to spend precious time manually acquiring 100 images – the script can even be left to run overnight.

It is also important to note that manually acquiring large numbers of images will generally involve a degree of human error or operator bias, so the use of automation in this instance will help improve accuracy and ensure a more statistically robust sample.

Once the script has been started and the images have been gathered, it is time to conduct image analysis and evaluation.

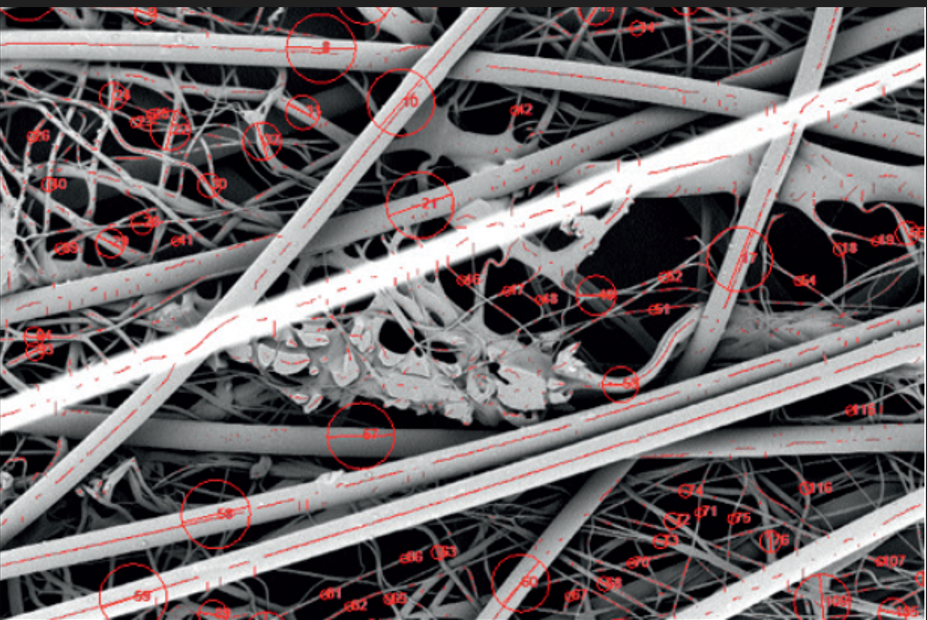

Image Credit: Fiber automation: Fiber thickness measurements can be done automatically with our Thermo Scientific™ Phenom FiberMetric™ Software.

Can scripting also be used for image analysis?

Yes. Many Python libraries can be used to analyze images. It is possible to measure the fiber thickness and virtually any other specification required. If you have the time and expertise, it is also possible to write your own image analysis algorithm.
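As a very simplified illustration of what a self-written analysis might involve, the sketch below measures fiber width and area coverage on a tiny synthetic binary image using only plain Python. It is not the FiberMetric algorithm. It assumes the image has already been thresholded into fiber (1) and background (0) pixels and that the fibers run vertically; real fibers at arbitrary orientations need an orientation-aware method and a pixel-to-nanometer calibration.

```python
def horizontal_run_lengths(binary_image):
    """Lengths of consecutive foreground (1) pixels in each row."""
    runs = []
    for row in binary_image:
        length = 0
        for pixel in row:
            if pixel:
                length += 1
            elif length:
                runs.append(length)
                length = 0
        if length:  # a run that touches the right edge
            runs.append(length)
    return runs


def coverage_fraction(binary_image):
    """Fraction of all pixels that belong to fibers."""
    total = sum(len(row) for row in binary_image)
    return sum(sum(row) for row in binary_image) / total


# Synthetic 6 x 10 image: two vertical "fibers", 3 and 2 pixels wide.
image = [[0, 1, 1, 1, 0, 0, 1, 1, 0, 0] for _ in range(6)]

runs = horizontal_run_lengths(image)
mean_width_px = sum(runs) / len(runs)
print(mean_width_px, coverage_fraction(image))
```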

Once image analysis is complete, it is time to gather the results and generate a report. For example, you could use a report function to compare your image data to your specifications, lay this out in an accessible way and generate a PDF file with ‘okay’ or ‘not okay’ for each batch imaged.
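A report step of this kind could be sketched as follows. The batch names, thickness values, and specification limits are made-up illustrative numbers, and the sketch produces plain text rather than a PDF, which would need an additional library.

```python
from statistics import mean


def evaluate_batch(thicknesses_nm, min_nm, max_nm):
    """Compare the mean fiber thickness of one batch with the specification."""
    avg = mean(thicknesses_nm)
    verdict = "okay" if min_nm <= avg <= max_nm else "not okay"
    return {"mean_nm": avg, "verdict": verdict}


def build_report(batches, min_nm, max_nm):
    """Return a plain-text report with one verdict line per batch."""
    lines = [f"Specification: mean fiber thickness {min_nm}-{max_nm} nm"]
    for name, values in batches.items():
        result = evaluate_batch(values, min_nm, max_nm)
        lines.append(f"{name}: mean {result['mean_nm']:.1f} nm -> {result['verdict']}")
    return "\n".join(lines)


# Hypothetical measurements, for illustration only.
batches = {
    "batch_A": [410, 390, 400, 400],
    "batch_B": [520, 540, 510, 530],
}
report = build_report(batches, min_nm=350, max_nm=450)
print(report)
```

Keeping the evaluation separate from the formatting makes it easier to adapt the same check to a different protocol or report layout.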

What are users’ options if they do not have the time, expertise, or desire to develop their own automation scripts?

If users do not have any scripting experience or are simply too busy to write scripts, there are some other options available.

Thermo Fisher Scientific offers scripting as a service. We work alongside users to write a script, and we support them through the process. As part of this service, we set up the specifications and workflow, write the script, test it with the user, make any corrections required and deliver a fully functional script tailored to the user’s specific application.

If users want to write a script themselves, we offer consultancy, can provide excellent example scripts, and offer a full manual with all the commands needed for users to start scripting independently. We are also available throughout the process to provide support and answer questions.

Image Credit: Phenom desktop: The Thermo Scientific Phenom XL G2 has capacity for up to 36 sample stubs. Together with its fast and reliable stage it is ideal for automation.

Are there any other benefits to using scripting that you would like to highlight?

The most important thing to mention is that automation is not just a black box. Automation does not compromise users’ understanding or education. Users can follow and visualize the whole process of image acquisition and analysis. Users remain in complete control, even though the process is fully automated.

Automation lets the SEM do the work, increasing productivity at every stage. Automation also makes the system accessible because anyone with minimal training can operate automated SEM runs. The process remains consistent once it has been set up.

It also gives users confidence in their data. Instead of gathering just a few images, users can collect large amounts of data, providing a robust statistical basis for their analysis.
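The statistical benefit of collecting more images can be made concrete with the standard error of the mean, which shrinks with the square root of the number of independent measurements. The sketch below assumes a hypothetical spread of 40 nm in fiber thickness; that number is chosen purely for illustration.

```python
import math


def standard_error(std_dev, n):
    """Standard error of the mean for n independent measurements."""
    if n < 1:
        raise ValueError("n must be at least 1")
    return std_dev / math.sqrt(n)


ASSUMED_STD_DEV_NM = 40.0  # hypothetical measurement spread, not from the interview

for n in (5, 50, 500):
    print(f"n = {n:3d}: standard error = {standard_error(ASSUMED_STD_DEV_NM, n):.2f} nm")
```

Going from 5 to 50 measurements, or from 50 to 500, reduces the uncertainty of the mean by a factor of about three in each case, provided the measurements are independent.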

To summarize, scripting can:

- Save precious researcher time

- Allow a higher sample throughput (scripts can run at night)

- Make measurements more accurate

- Provide a better statistical sample

- Be quicker overall, freeing up tool time

Ruud Bernsen

Ruud Bernsen is an Applications Specialist for the large volume SEM solutions at Thermo Fisher Scientific. Ruud provides training and product support to our customers around the world. In addition, Ruud manages the development of custom-made scripting requests for the desktop SEM product range.

About Thermo Fisher Scientific

Thermo Fisher Scientific is the world leader in serving science. Through our Thermo Scientific™ brand, we provide innovative solutions for electron microscopy and microanalysis. We offer SEMs, TEMs and DualBeam™ FIB/SEMs in combination with software suites to take customers from questions to actionable data by combining high-resolution imaging with physical, elemental, chemical and electrical analysis across scales and modes - through the broadest sample types.

Disclaimer: The views expressed here are those of the interviewee and do not necessarily represent the views of AZoM.com Limited (T/A) AZoNetwork, the owner and operator of this website. This disclaimer forms part of the Terms and Conditions of use of this website.