Deep learning is set to radically transform the machine vision landscape. It is enabling new applications and disrupting long-established markets. Product managers at FLIR visit companies across a wide range of industries, and they report that every company they have visited recently is developing deep learning systems.

It has never been easier to start a deep learning project, but where do you begin? This article lays out a straightforward framework for building a deep learning inference system for less than $600.

What is Deep Learning Inference?

Inference uses a neural network trained with deep learning to make predictions on new data. Compared with conventional rules-based image analysis, it is much better at answering complex and subjective questions.
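As a minimal sketch of what inference means in practice, the Python below applies a tiny, already-trained classifier to a new input. The labels, weights, and feature values are made up for illustration; a real network would be far larger, and its weights would come from training rather than being written by hand.

```python
import math

# Hypothetical weights for a tiny two-class linear classifier.
# In a real system these values are produced by training.
LABELS = ["good part", "defective part"]
WEIGHTS = [[2.0, -1.0], [-1.5, 1.0]]  # one row of weights per class
BIASES = [0.0, 0.5]


def softmax(scores):
    """Convert raw class scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def infer(features):
    """Run one forward pass and return (label, confidence)."""
    scores = [
        sum(w * x for w, x in zip(row, features)) + b
        for row, b in zip(WEIGHTS, BIASES)
    ]
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return LABELS[best], probs[best]


# New, previously unseen input (illustrative feature values).
label, confidence = infer([0.2, 0.9])
print(f"{label}: {confidence:.2f}")
```

The point of the sketch is that no learning happens at this stage: the weights are fixed, and inference is only the forward pass that turns an input into a prediction with a confidence score.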

When networks are optimized to run on low-power hardware, inference can be performed 'on the edge', near the data source. This removes the system’s dependence on a central server for image analysis, resulting in lower latency, greater reliability, and improved security.

1. Selecting the Hardware

The aim of this guide is to build a reliable, high-quality system for deployment in the field. Although it is beyond the scope of this guide, combining traditional computer vision techniques with deep learning inference can deliver high accuracy and computational efficiency by drawing on the strengths of each approach.

The Aaeon UP Squared-Celeron-4GB-32GB single-board computer (SBC) has the memory and CPU power necessary for this approach. Its x64 Intel CPU runs the same software as conventional desktop PCs, which simplifies development compared with ARM-based SBCs.

The code that enables deep learning inference uses branching logic; dedicated hardware can greatly accelerate the execution of this code.

The Intel® Movidius™ Myriad™ 2 Vision Processing Unit (VPU) is a powerful and efficient inference accelerator, which has been incorporated into FLIR’s latest inference camera, the Firefly DL.

Source: FLIR Systems

| Part | Part Number | Price [USD] |
| --- | --- | --- |
| USB3 Vision Deep Learning Enabled Camera | FFY-U3-16S2M-DL | 299 |
| Single Board Computer | UP Squared-Celeron-4GB-32GB-PACK | 239 |
| 3m USB 3 cable | ACC-01-2300 | 10 |
| Lens | ACC-01-4000 | 10 |
| Software | Ubuntu 16.04/18.04, TensorFlow, Intel NCSDK, FLIR Spinnaker SDK | 0 |
| | Total | $558 |
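As a quick check of the arithmetic, the short Python sketch below sums the prices listed in the parts table and compares the result with the $600 ceiling mentioned in the introduction. The dictionary keys are descriptive labels only.

```python
# Prices in USD, copied from the parts table above.
PARTS = {
    "USB3 Vision deep learning enabled camera (FFY-U3-16S2M-DL)": 299,
    "Single-board computer (UP Squared-Celeron-4GB-32GB-PACK)": 239,
    "3 m USB 3 cable (ACC-01-2300)": 10,
    "Lens (ACC-01-4000)": 10,
    "Software (all free)": 0,
}
BUDGET = 600  # the article's stated ceiling

total = sum(PARTS.values())
headroom = BUDGET - total
print(f"Total: ${total}, headroom under budget: ${headroom}")
# prints "Total: $558, headroom under budget: $42"
```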

2. Software Requirements

Many free tools are available for building, training, and deploying deep learning inference models. This project uses a range of free and open-source software.

Installation instructions for each software package are available on its website. This guide assumes that you are familiar with the basics of the Linux console.

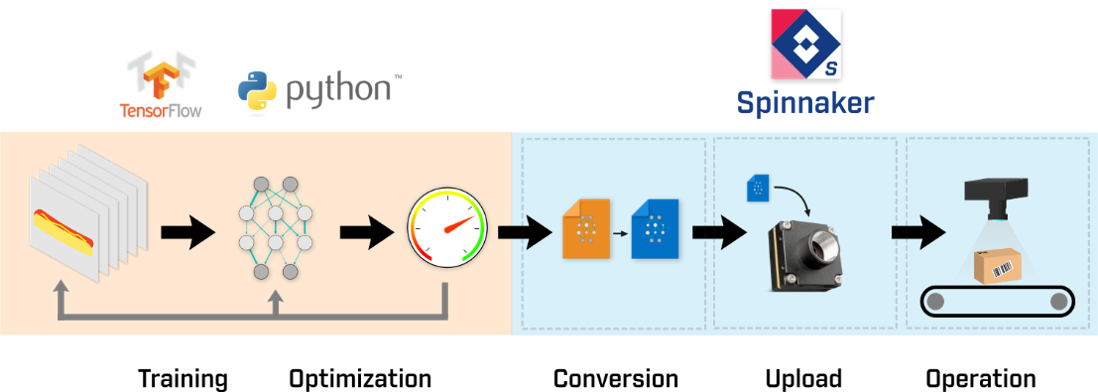

| Collect Training Data | Train Network (augmentation optional) | Evaluate performance | Convert to Movidius graph format | Deploy to Firefly DL camera | Run inference on captured images |
| --- | --- | --- | --- | --- | --- |

Figure 1. Deep learning inference workflow and the associated tools for each step. Image Credit: FLIR Systems
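The "Convert to Movidius graph format" step is typically done with the Intel NCSDK's `mvNCCompile` tool. The sketch below only assembles the command line as a Python list so that it can be inspected or handed to `subprocess.run`; it does not run anything. The file names, node names, and output name are hypothetical placeholders, and the optional `-s` value (the number of SHAVE cores to target) depends on the device, so the 'Getting Started with Firefly Deep Learning on Linux' guide should be treated as authoritative for the actual values.

```python
import shlex


def build_compile_command(frozen_graph, input_node, output_node,
                          out_file, shaves=None):
    """Assemble an mvNCCompile invocation as an argument list.

    Nothing is executed here; pass the result to subprocess.run on a
    machine where the Intel NCSDK is installed.
    """
    cmd = ["mvNCCompile", frozen_graph,
           "-in", input_node,    # name of the network's input node
           "-on", output_node,   # name of the network's output node
           "-o", out_file]       # where to write the compiled graph
    if shaves is not None:
        cmd += ["-s", str(shaves)]  # number of SHAVE cores to target
    return cmd


# All names below are hypothetical placeholders, not values from the article.
cmd = build_compile_command(
    frozen_graph="retrained_graph.pb",
    input_node="input",
    output_node="final_result",
    out_file="InferenceNetwork",
)
print(" ".join(shlex.quote(arg) for arg in cmd))
```

Building the command as a list rather than a single string avoids shell quoting problems if a path contains spaces.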

3. Detailed Guide

‘Getting Started with Firefly Deep Learning on Linux’ introduces how to retrain a neural network, convert the resulting file into a Firefly-compatible format, and display the results using SpinView. It walks users step by step through training and converting inference networks themselves from the terminal.

This information has been sourced, reviewed and adapted from materials provided by FLIR Systems.
